The First Paradigm in Robotics & AI Research: Lessons from Computer Engineering

Commoditization and end-to-end learning have consolidated robotics and AI. What's next for research labs?

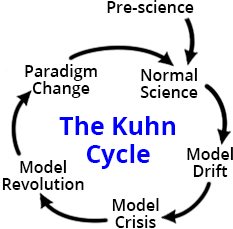

Thomas Kuhn wrote that scientific fields develop into dominant paradigms that characterize phases of productive but incremental research. The very existence of a paradigm is evidence of the maturation of a field.

For robotics, we may be in the midst of the first time this has ever happened.[1] The start of our research careers resembled the “wild west” of emerging techniques and technologies, but ideas have since converged. On the hardware side, robots have gotten good enough that thousands are being shipped and used, by consumers and researchers alike. On the algorithm side, the bitter lesson and its corollary, the hypothesized “scaling laws,” have provided a scaffolding around which progress can be evaluated. End-to-end behavior cloning policies seem like they can generalize to all sorts of tasks, and performance predictably improves with more data. We’ll refer to these two trends as commoditization and architectural convergence, and discuss how they shape the current paradigm below.

The establishment of this current paradigm has also had side-effects on the nature of research that may in themselves be setting us up for paradigm shifts. While it is a bit of an overreach to use the term “revolution” for robotics (as Kuhn did for science), such a shift would be pivotal for researchers and is worth understanding.

This article is co-written by Avik De and Chris Paxton, both robotics researchers with experience in academia as well as industry. Chris writes about AI and robotics, and Avik writes about robotics, computing, and AI.

Trends in Robotics and AI

1) Commoditization

Going back to 2013, Avik’s Ph.D. research included the development of an internal research robot, Minitaur.

It took a lot of (Ph.D. student) effort to build the infrastructure, but the result was a unique development platform that was easy to program (like an Arduino), lightweight and relatively safe (5 kg), and capable of producing very agile and exciting-looking behaviors. There was nothing like it that you could buy. All in all, this endeavor to develop a new robot led to papers, cool movies to show in talks, and even a startup company.

In the decade after, four-legged robots started to get out of the research lab and into public consciousness. The show Silicon Valley had a Boston Dynamics Spot cameo in 2016, and videos designed to appeal to a broad audience, such as robots dancing, started to appear. Four-legged robots were officially out of the lab and in the wild, and this raised expectations for what they should do. Walking stably used to be cutting edge, but became table stakes. Expectations for specs such as reliability, battery life, compute capability, and ruggedness drove designs to be more complex. It became much more difficult for a couple of researchers with minimal engineering experience to put together a new robot. Moreover, after the Chinese company Unitree entered the market and dropped the asking price by almost 30x in 2021, it was no longer worth the time and money to even try.

The pre-paradigm period of lab-developed robotic hardware is being replaced by algorithm development for commoditized hardware.

We have seen this play out in several robotics research labs. DJI commoditized consumer drones aggressively from 2013 onward, making it hard to justify custom builds even for capability reasons. By the mid-2010s, labs doing serious flight research (e.g., Davide Scaramuzza’s group at the University of Zurich) were exclusively using commercial platforms. ETH Zurich’s Robotic Systems Lab (which originally built ANYmal, and before it StarlETH) now deploys its locomotion research on the ANYmal platform rather than building new hardware. Boston Dynamics has an article about how commercial platforms let researchers hit the ground running.

Post-commoditization, researchers who want to demonstrate algorithms working on robots can reap the benefits. Humanoid research circa 2015 meant figuring out “how do we actually build these things and make them not fall over,” whereas post-commoditization, time can be spent on higher-level algorithms and methods — we refer to this phenomenon as “moving up the stack.”

A secondary effect of commoditization is that parts are now easier to get, and researchers can put together novel modular combinations of more mature components. The WidowX 250 Dynamixel-based arm from Trossen Robotics has become the default low-cost manipulation platform because it is cheap (~$3k) and can be used to create “leader-follower” setups for data collection. The ALOHA paper notes that the whole system with two arms costs ~$20k off-the-shelf. More recently, we have seen robots like the YOR assembled from off-the-shelf parts for research purposes. This effect enables new types and form-factors of robots to be built — we will return to this in the next section.

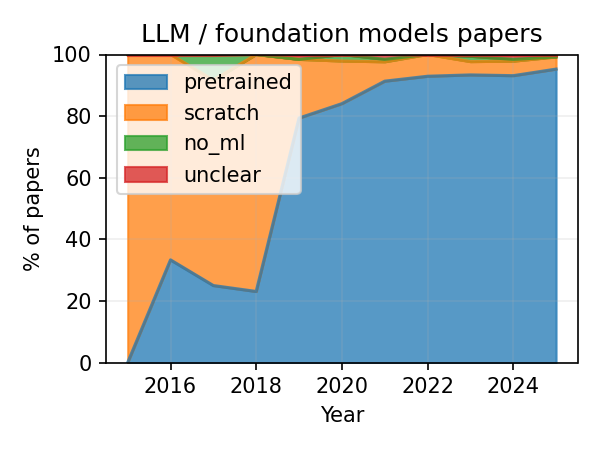

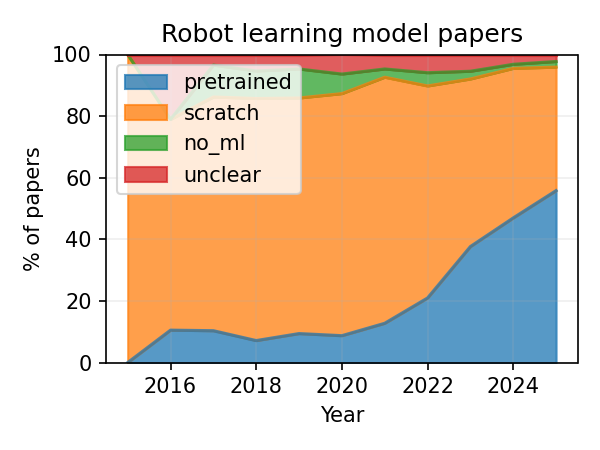

The same trend applies to non-hardware AI research. Academic research labs can no longer realistically train frontier language models, so research in these areas is moving to fine-tuning commercial models instead. The following plots, generated from arXiv data, show research shifting toward using pretrained models rather than building models from scratch.

The Qwen series of models by Alibaba has nearly taken over the research world by making fine-tuning easy. In 2026, no academic would think of training their own language model or even vision-language model from scratch. Why would you, when Qwen 3.5 can already beat anything that’s within reach of an ordinary academic lab?

Just like for robotics hardware, the pre-paradigm period of lab-developed models is being replaced by fine-tuning commercial models.

Here as well, there are research ideas that can be pursued by moving up the stack: agentic reasoning, reinforcement learning, world representations, novel model architectures, etc. Robotics models are not like language models; there are fewer real-world benchmarks, and it seems that even within the domain of end-to-end deep learning there are plenty of ideas left unexplored.

2) Architectural Convergence

Labs used to have a narrower focus where they could carve their niche, e.g. computer vision, legged locomotion, etc. However, for a robot to demonstrate complex sensorimotor tasks, you need all of the Sense-Plan-Act functions implemented in some way. If you subscribe to the bitter lesson, even the best computer vision algorithm, when connected using hand-crafted interfaces to a planner and other downstream systems, cannot compete with end-to-end systems. General-purpose manipulation / locomotion research is converging on behavior cloning and VLAs since it works well enough across many tasks, and performance improves with larger models and more data.

This trend has pushed many previously diverse labs toward developing end-to-end models, which significantly reduces the diversity and richness of the research ecosystem. For better or worse, we appear to be solidly in a paradigm of behavior cloning with end-to-end models.

This has several benefits for researchers: they can build on existing work easily without re-inventing the wheel, and it creates a scaffolding for new contributions. However, it also has the side-effect of suppressing other schools of thought. In Kuhn’s somewhat ominous words,

“But there are always some men who cling to one or another of the older views, and they are simply read out of the profession, which thereafter ignores their work. The new paradigm implies a new and more rigid definition of the field. Those unwilling or unable to accommodate their work to it must proceed in isolation or attach themselves to some other group.”

How do research labs and out-of-paradigm ideas stand out in the face of homogenization and consolidation in this paradigm? We discuss what we can learn from computer engineering in the next section.

What we can learn from Computer Engineering

By necessity, computer engineering has always been a bit ahead of robotics on the same technology curve. After all, robots need chips to run the computations that make them work.

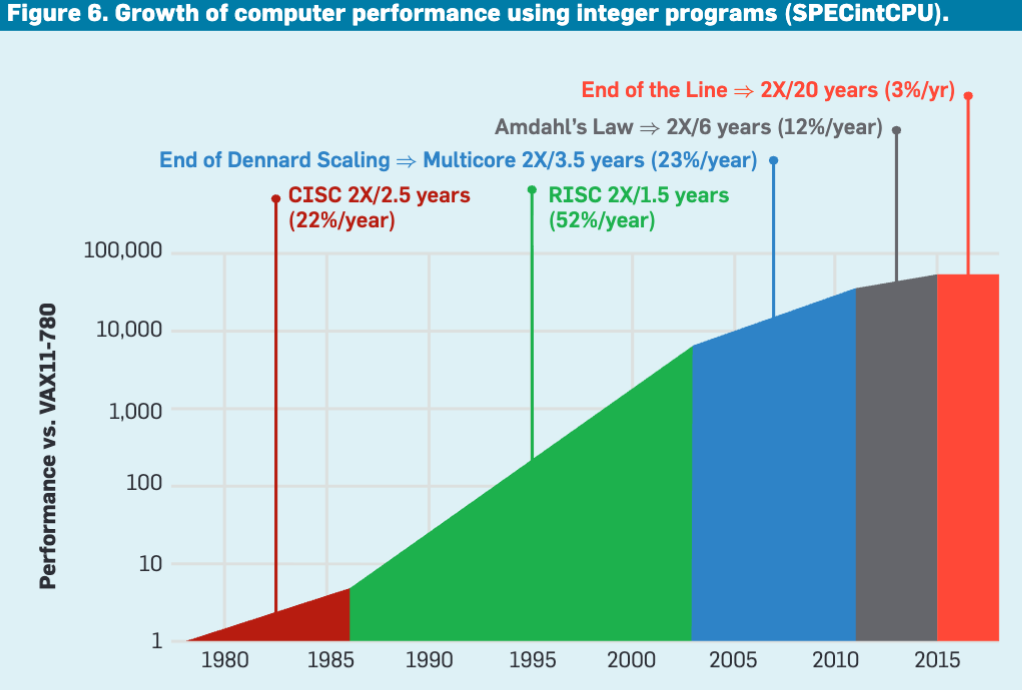

We saw a similar commoditization trend there, with hardware complexity outgrowing what a research lab could build. The initial university fab era was anchored by DARPA’s VLSI Project, which produced BSD Unix, the RISC concept, and MOSIS (a shared fabrication service for academia). Once that era ended, academic research pivoted to what could be done without a fab.

Computer engineering therefore offers good examples of moving up the stack (transistors → meta-design tools and ISAs). Circa 2010, rather than building chips, Krste Asanović’s group at Berkeley designed the open RISC-V ISA, explicitly motivated by the problem of proprietary architectures impeding academic research. With Chisel (also from Berkeley), academics built better tools for designing chips by expressing hardware designs in a high-level language, and it became the foundation for many RISC-V implementations.

In addition, CPU architectures converged to x86 for desktop and ARM for mobile because they worked well enough for most workloads, and design costs could be amortized across different applications — a general-purpose computing paradigm.

Hennessy and Patterson’s 2017 Turing Award lecture argued that the post-Dennard-scaling era opens up a new window for research in domain-specific accelerators, where the design space is exploratory again. Coincident with the success of deep neural networks, the last few years have seen a Cambrian explosion in AI accelerators, ushering in much more innovation in computer architecture and silicon than was possible in CPUs.

In other words, computer engineering’s paradigm shift resulted in domain-specific diversification.

How do these apply to robotics and AI?

Just as chip fabrication leaving academia didn’t end computer architecture research, robotics research will find a home in core algorithms, training methodologies, and novel architectures. Papers can continue to be written on new methods and algorithms, but unfortunately the flashy demonstrations (important for fundraising and PR) may move out of reach of academic labs. Just as ChatGPT capitalized on published transformer research, companies will capitalize on published public-domain robotics research. It may become crucial to have a mechanism that credits academics for commercial usage of their work (something academic metrics such as the h-index do not capture).

The largest robotics companies are converging on general-purpose humanoids, optimizing for the broadest possible applicability and commercial value. By analogy to computer engineering’s domain-specific diversification, the next productive frontier for academic labs may be task-specific robots: surgical, agricultural, soft robots, etc., which diverge enough from general-purpose designs to make bespoke solutions worthwhile. A positive side-effect of commoditization is that mature hardware components (actuators, IMUs, and perception systems like the Kinect) are readily available, and together they facilitate this kind of development.

The Future

While the external perception of robotics and AI research is that we are undergoing a revolution today, the internal view is more consistent with commoditization and convergence. This paradigm has had a lot of positive side-effects, like establishing a framework and shared infrastructure, but also some serious downsides, like stifling research that doesn’t fit the mold.

In response, we already see robotics research moving up the stack, and we may begin to see examples of domain-specific diversification if the largest companies with the largest datasets corner the end-to-end behavior cloning approach.

Beyond that, it’s too early to predict if there is a paradigm shift coming. Kuhn says on this topic:

“Sometimes a normal problem, one that ought to be solvable by known rules and procedures, resists the reiterated onslaught of the ablest members of the group within whose competence it falls. On other occasions a piece of equipment designed and constructed for the purpose of normal research fails to perform in the anticipated manner, revealing an anomaly that cannot, despite repeated effort, be aligned with professional expectation.”

Will there be a “piece of equipment” or “normal problem” whose unexpected result paves the way for the next robotics revolution? Optimistically, it seems like the current paradigm still has legs for a little while longer, but there is already work at the fringes looking toward the next set of leaps, like world model research, neuromorphic computing, etc. We’ll be writing about these topics over the coming weeks and months; stay tuned!

[1] While originally intended for scientific fields, the idea has been extended to broader technological fields.

On the topic of IL/BC/VLAs dominating manipulation and locomotion: I might be wrong, but I was under the impression that, despite recent advances, these two application fields are still separate in their approaches? For example, I haven't seen any VLAs fully control a whole loco-manipulation task, giving action chunks for both the arms and legs. Vice versa, the Omni-* related lines of work (OmniXtreme, OmniRetarget, similar works not named Omni-...) use IL/BC for locomotion and even gross loco-manipulation tasks, but haven't been able to cross over into the fine-grained manipulation that VLAs dominate.

Maybe it's just cope on my end, but I think (or hope) that there's valuable research to be done in domains in which we don't have ample data (and might never have), such as control policies for non-humanoid morphologies. Perhaps, despite the hype on "one policy to control them all," there will be specialization in morphology control for applications? Maybe that's wishful thinking lol.

Looking forward to more of these articles!